The AI video generation landscape has reached a pivotal moment in 2026. Two models now dominate the conversation: Seedance 2.0 from ByteDance and Sora 2 from OpenAI. Both represent significant leaps forward in video synthesis technology, yet they take fundamentally different approaches to solving the same creative challenges. This comprehensive comparison examines every dimension that matters—from technical specifications and output quality to pricing structures and real-world use cases—to help you understand which model delivers the capabilities you actually need.

What Makes Seedance 2.0 Different

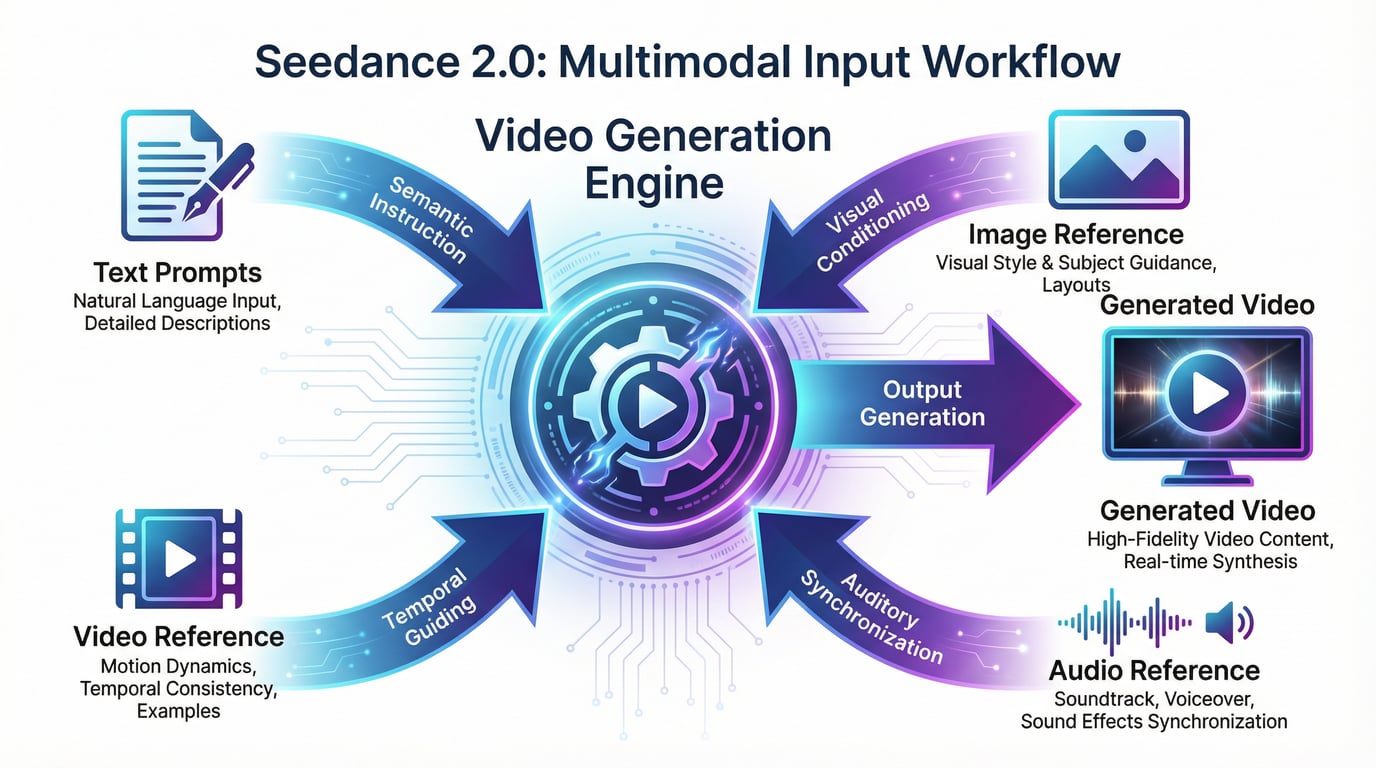

Seedance 2.0 introduces a unified multimodal architecture that fundamentally changes how creators interact with AI video generation. Unlike traditional text-to-video models that rely primarily on written prompts, Seedance 2.0 accepts four simultaneous input types: text descriptions, reference images, video clips, and audio files. This quad-modal reference system allows you to specify exactly what you want by showing the model examples rather than struggling to describe everything in words.

The practical implications are substantial. When you need a specific camera movement, you upload a reference video demonstrating that exact motion. When you want a particular visual style, you provide an image that captures that aesthetic. When you need audio synchronized to specific beats or rhythms, you supply the audio track directly. The model then combines these references according to your natural language instructions, giving you director-level control without requiring technical expertise in prompt engineering.

This multimodal approach solves one of the most persistent problems in AI video generation: the gap between creative intent and actual output. Previous models forced creators into a frustrating cycle of prompt refinement, hoping to stumble upon the magic combination of words that would produce the desired result. Seedance 2.0 eliminates much of that guesswork by allowing you to communicate through multiple channels simultaneously.

Technical Specifications: Where Each Model Excels

Resolution and Output Quality

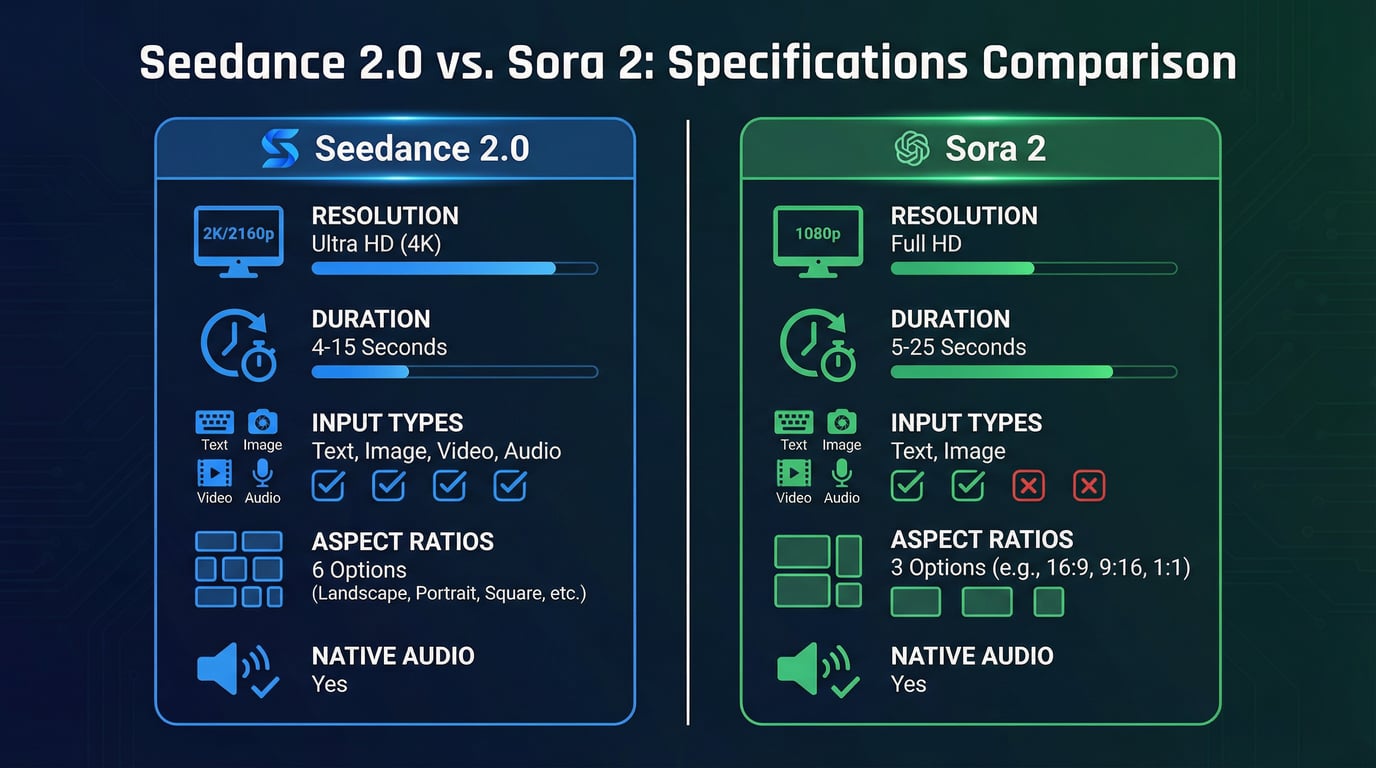

Seedance 2.0 delivers native 2K resolution at 2048×1152 pixels, positioning it as the highest-resolution option currently available in production AI video models. This resolution advantage matters significantly for content destined for large displays, high-definition advertising campaigns, or any application where visual fidelity directly impacts perceived quality. The model supports six aspect ratios: 16:9, 9:16, 4:3, 3:4, 21:9, and 1:1, covering virtually every common use case from YouTube videos to Instagram stories to ultra-wide cinematic formats.

Sora 2 maxes out at 1080p resolution, which remains professional-grade for most applications but falls short of Seedance 2.0's output fidelity. Where Sora 2 compensates is in its exceptional handling of lighting, texture detail, and color grading. The model demonstrates a sophisticated understanding of how light behaves in physical spaces, producing videos with cinematic depth and visual richness that sometimes surpasses higher-resolution competitors.
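To put the resolution gap in concrete terms, the short sketch below counts pixels per frame for both formats. It assumes that "1080p" means the standard 1920×1080 frame, which this comparison does not state explicitly, and the function names are purely illustrative.

```python
# Pixel counts for the two maximum resolutions discussed in this section.
# Assumption: "1080p" refers to the standard 1920x1080 frame.
SEEDANCE_2K = (2048, 1152)  # resolution stated for Seedance 2.0
SORA_1080P = (1920, 1080)   # assumed frame size for Sora 2's 1080p


def pixel_count(size):
    """Return the number of pixels in a (width, height) frame."""
    width, height = size
    return width * height


def pixel_ratio(a, b):
    """Return how many times more pixels frame a holds than frame b."""
    return pixel_count(a) / pixel_count(b)


ratio = pixel_ratio(SEEDANCE_2K, SORA_1080P)
print(f"{pixel_count(SEEDANCE_2K):,} vs {pixel_count(SORA_1080P):,} pixels")
print(f"ratio: {ratio:.3f}")
```

Under that assumption, the 2K frame carries about 14% more pixels than a 1080p frame, with each dimension roughly 6.7% larger. The difference is real but moderate, which is consistent with lighting and texture quality sometimes mattering more than raw resolution.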

Video Duration and Temporal Consistency

Sora 2 holds a decisive advantage in video length, supporting generations from 5 to 25 seconds depending on the tier you access. The Pro version's 25-second capability represents a 4x increase over the original Sora model's 6-second limit, enabling complete narrative sequences without requiring multi-segment stitching. This extended duration makes Sora 2 particularly valuable for storytelling applications, product demonstrations, and any content that benefits from sustained narrative development.

Seedance 2.0 generates videos between 4 and 15 seconds, focusing on shorter high-impact clips optimized for social media, advertisements, and quick-cut editing workflows. While this shorter duration might seem limiting, it aligns perfectly with the dominant content formats on platforms like TikTok, Instagram Reels, and YouTube Shorts, where Seedance 2.0's ByteDance heritage shows through in its design priorities.

Seedance 2.0 extends videos through a continuation system designed to maintain character and scene consistency across multiple generations. Testing reveals that the first two or three extensions preserve quality effectively, but degradation becomes noticeable by the fourth, which makes this approach better suited to rough previews than to final delivery.

Physics Simulation and Motion Realism

Sora 2 sets the industry benchmark for physics accuracy and cause-and-effect understanding. The model demonstrates remarkable capabilities in simulating complex physical interactions: Olympic gymnastics routines with accurate body mechanics, water dynamics that properly model buoyancy and fluid behavior, and fabric movement that respects material properties and gravitational forces. This physics-first approach produces motion that feels grounded in reality rather than artificially generated.

Independent testing confirms Sora 2's leadership in this dimension, with evaluators noting its superior handling of object permanence, realistic collision physics, and natural cause-and-effect relationships. The model maintains consistent character appearance and world state across extended durations, a critical capability for narrative content where continuity errors break immersion.

Seedance 2.0 takes a different approach, prioritizing motion smoothness and cinematic camera behavior over strict physical accuracy. The model excels at producing film-like camera movements (tracking shots, dolly zooms, crane movements) that feel professionally executed rather than mechanically generated. For content where visual style and emotional impact matter more than physical precision, Seedance 2.0's motion characteristics often produce more aesthetically pleasing results.

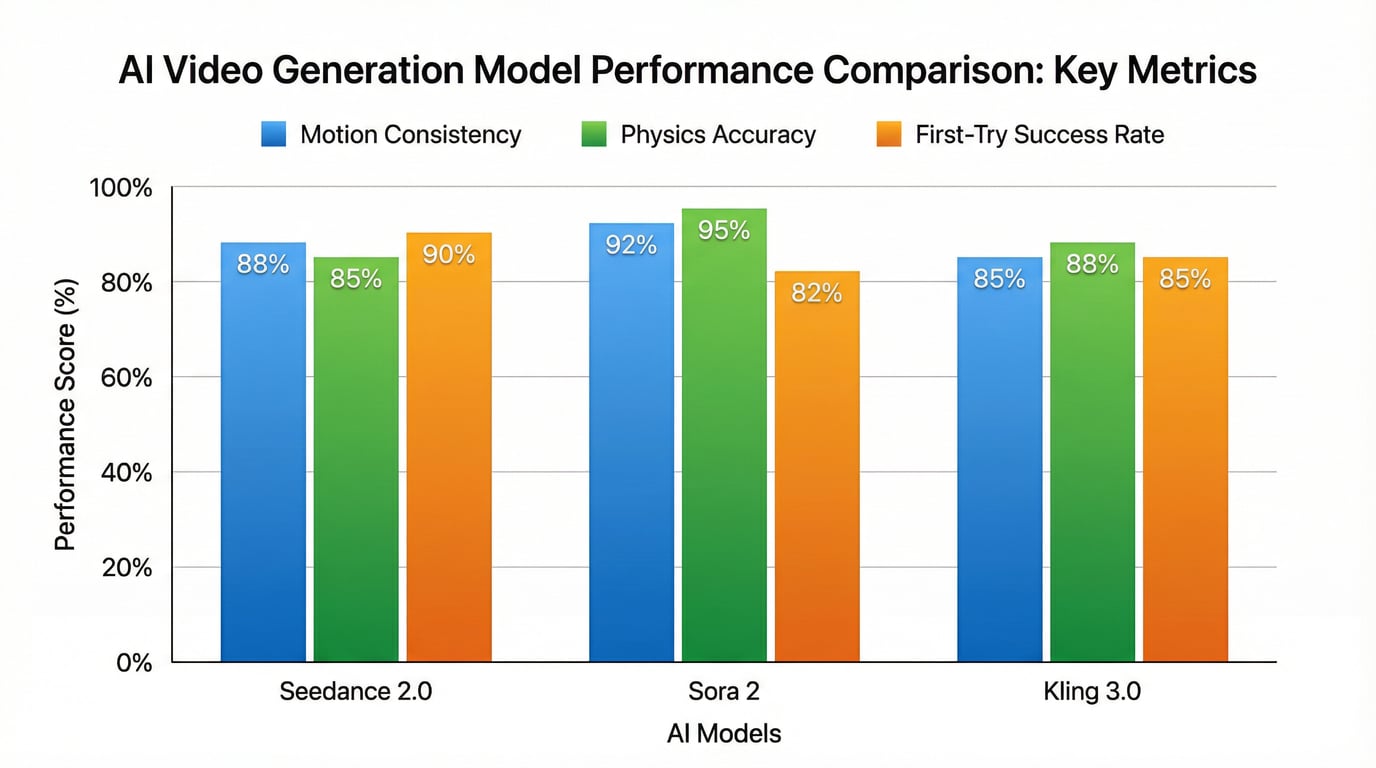

Testing reveals that Seedance 2.0 achieves approximately 90% usable output on the first generation attempt, dramatically reducing the trial-and-error workflow that plagued earlier AI video tools. This high success rate transforms video generation from an unpredictable lottery into a reliable production process.

The Multimodal Advantage: Seedance 2.0's Unique Capability

The most significant differentiator between these models lies in input flexibility. Seedance 2.0's quad-modal reference system represents a fundamental rethinking of how creators communicate with AI video models. You can upload up to 12 reference files across four categories, then use natural language to specify how the model should combine and apply these references.

This capability enables workflows that are simply impossible with text-and-image-only models. When creating a dance video, you upload the audio track for perfect beat synchronization, reference images for character appearance, and a video clip demonstrating the desired choreography style. The model synthesizes these inputs into a cohesive output that respects all your specifications simultaneously.
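As a rough illustration of how such a request might be organized, the sketch below groups the references for the dance-video example and checks them against the 12-file limit. `ReferenceBundle`, its field names, and the file names are all hypothetical and do not reflect any actual Seedance 2.0 API. The sketch also assumes that the limit counts image, video, and audio files but not the text instruction.

```python
from dataclasses import dataclass, field

MAX_REFERENCE_FILES = 12  # limit stated in this article


@dataclass
class ReferenceBundle:
    """Hypothetical container for the inputs to one generation request."""
    prompt: str                                  # natural language instruction
    images: list = field(default_factory=list)   # appearance or style references
    videos: list = field(default_factory=list)   # motion or camera references
    audio: list = field(default_factory=list)    # rhythm or atmosphere references

    def file_count(self):
        """Count uploaded files (assumes the text prompt is not a file)."""
        return len(self.images) + len(self.videos) + len(self.audio)

    def validate(self):
        """Raise ValueError if the bundle exceeds the stated file limit."""
        count = self.file_count()
        if count > MAX_REFERENCE_FILES:
            raise ValueError(
                f"{count} reference files exceeds the limit of {MAX_REFERENCE_FILES}"
            )


# The dance-video workflow described above, with made-up file names.
dance_bundle = ReferenceBundle(
    prompt="Match the choreography style of the clip and cut on the beat.",
    images=["dancer_front.png", "dancer_side.png"],
    videos=["choreography_style.mp4"],
    audio=["track.mp3"],
)
dance_bundle.validate()
print(dance_bundle.file_count(), "reference files")
```

Keeping the three file categories separate makes it easy to see which creative dimension each reference is meant to control before the text instruction ties them together.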

The audio reference capability deserves particular attention because it's unique to Seedance 2.0 among leading models. While Sora 2 generates synchronized audio as an output, it cannot accept audio as an input reference. This means you cannot specify a particular sound atmosphere, voice characteristic, or musical rhythm for Sora 2 to follow. Seedance 2.0's audio input support enables precise control over the sonic dimension of your videos, crucial for music videos, branded content with specific audio identities, and any application where audio-visual synchronization drives the creative concept.

Sora 2 currently supports text and image inputs only, focusing on generating both video and audio from these sources. This simpler input structure makes Sora 2 more straightforward to use for creators who prefer working primarily with written descriptions, but it sacrifices the granular control that multimodal references provide.

Native Audio Generation: Both Models Deliver

Both Seedance 2.0 and Sora 2 generate synchronized audio natively, eliminating the need for separate audio production workflows. This shared capability represents a major advancement over earlier AI video models that produced silent output requiring manual sound design.

Seedance 2.0 employs a dual-branch diffusion transformer architecture with separate processing paths for video and audio. This design ensures tight synchronization between visual events and their corresponding sounds—footsteps that align with foot strikes, door slams that match visual impacts, and ambient soundscapes that evolve naturally with scene changes. The audio generation system creates dialogue, environmental sounds, and sound effects that feel integrated with the visuals rather than artificially layered afterward.

Sora 2 similarly generates synchronized dialogue and sound effects with a high degree of realism. The model can create sophisticated background soundscapes, speech with natural prosody, and sound effects that respond appropriately to on-screen action. Testing reveals that Sora 2's audio quality matches or exceeds Seedance 2.0 in terms of fidelity and realism, though the lack of audio input reference means you have less direct control over the sonic characteristics.

Multi-Shot Narrative Capabilities

Seedance 2.0 introduces a narrative planning system that automatically breaks complex prompts into multi-shot sequences. Earlier AI video models attempted to cram entire stories into single continuous takes, resulting in temporal compression, warped motion, or ignored prompt elements when the description exceeded the model's capacity. Seedance 2.0's planner analyzes your prompt, identifies natural scene breaks, and generates a sequence of shots that together tell the complete story.

This multi-shot capability produces results that feel like edited sequences rather than raw single-take footage. The model maintains character consistency, visual style, and narrative continuity across shot boundaries, solving one of the most challenging problems in AI video generation. For creators producing narrative content, explainer videos, or any application requiring multiple perspectives or scene changes, this feature dramatically expands what's possible within a single generation.

Sora 2 maintains exceptional consistency across its longer single-shot durations but handles multi-shot sequences differently. The model excels at sustained single-perspective scenes with complex action, making it ideal for continuous narrative moments that benefit from unbroken temporal flow. For multi-shot sequences, creators typically generate separate clips and edit them together manually, which provides more precise control over transitions but requires additional production work.

Performance Benchmarks: Real-World Testing Results

Independent testing across multiple evaluation frameworks provides quantitative comparison data. In VBench evaluations, a widely used benchmark for video generation quality, the performance gap between Open-Sora 2.0 (an open-source implementation approaching commercial Sora capabilities) and OpenAI's Sora has narrowed to just 0.69%, which suggests that current-generation models are approaching parity on measurable quality metrics. That figure does not directly compare Seedance 2.0 with Sora 2, so it is best read as context for the field as a whole.

Community testing reveals distinct performance profiles. Seedance 2.0 demonstrates superior motion coherence and camera dynamics, with objects and camera movements that feel natural and professionally executed. The model's first-attempt success rate of roughly 90% significantly outperforms earlier tools that required multiple generation attempts to achieve usable results.

Sora 2 leads in physics simulation accuracy and temporal consistency, particularly for scenes involving complex physical interactions, multiple characters, or extended narrative sequences. The model's understanding of cause-and-effect relationships and object permanence produces videos where the world behaves predictably and consistently throughout the clip.

For cinematic storytelling requiring smooth motion and sophisticated camera work, Seedance 2.0 demonstrates clear advantages in testing. For technically demanding scenes with fast action, complex physics, or extended durations, Sora 2 currently delivers more stable outputs.

Pricing and Accessibility: The Cost Factor

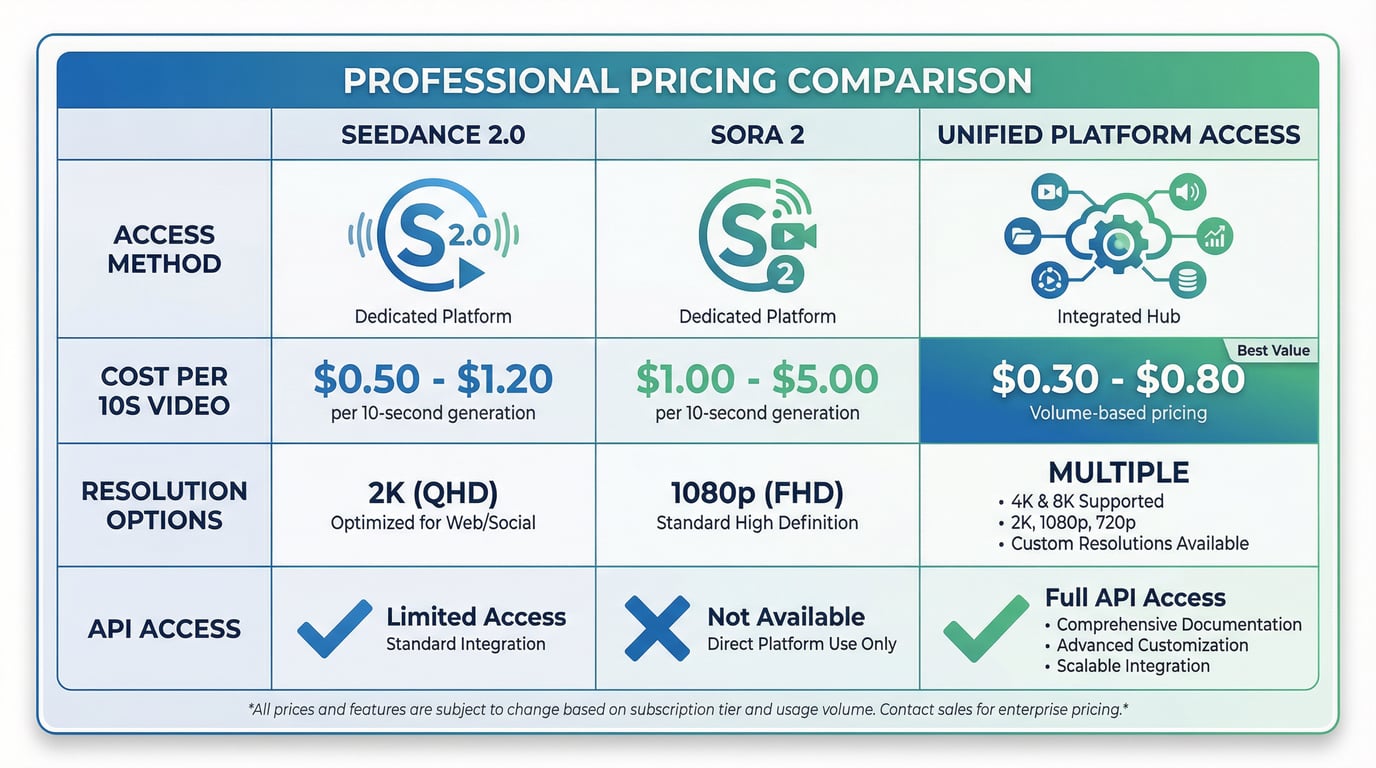

Pricing structures differ significantly between these models, reflecting different business strategies and target markets.

Sora 2 operates on a per-second billing model through OpenAI's API, charging $0.10 to $0.50 per second depending on resolution and tier. A typical 10-second video at standard resolution costs approximately $1.00, while Pro tier generations at maximum quality can reach $5.00 for the same duration. OpenAI also offers subscription access through ChatGPT Plus ($20/month) and ChatGPT Pro ($200/month), which provide credit-based generation with daily limits.
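The per-second arithmetic can be sketched in a few lines. The two rates below are the endpoints of the range quoted in this section; the function name is illustrative, and actual billing may depend on tier and resolution combinations not modeled here.

```python
# Per-second billing sketch using the rate range quoted in this section.
STANDARD_RATE = 0.10  # USD per second, low end of the quoted range
PRO_MAX_RATE = 0.50   # USD per second, high end of the quoted range


def clip_cost(duration_seconds, rate_per_second):
    """Return the cost in USD of one clip billed per second."""
    return round(duration_seconds * rate_per_second, 2)


for seconds in (5, 10, 25):
    low = clip_cost(seconds, STANDARD_RATE)
    high = clip_cost(seconds, PRO_MAX_RATE)
    print(f"{seconds:>2}s clip: ${low:.2f} to ${high:.2f}")
```

Because cost scales linearly with duration, a 25-second clip at the top rate would come to $12.50 under this simple model, which is worth keeping in mind when budgeting for longer generations.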

The subscription model provides better value for high-volume users who can utilize their daily credit allocations fully. ChatGPT Plus offers approximately 30 credits daily, translating to roughly 15-30 videos depending on duration and resolution settings. ChatGPT Pro provides 100+ credits daily, supporting professional production workflows with higher volume requirements.

Seedance 2.0 pricing varies by access method. The model is currently available primarily through ByteDance's Jimeng (Dreamina) platform, with API access expected to launch through Volcengine on February 24, 2026. Third-party providers offer Seedance 2.0 access at costs ranging from $0.50 to $1.20 per 10-second video, generally lower than Sora 2's official pricing but higher than some third-party Sora 2 resellers.

The cost equation extends beyond per-video pricing to include the success rate factor. Seedance 2.0's 90% first-attempt success rate means you typically achieve usable results without multiple generation attempts, effectively reducing your real-world cost per usable video. Models with lower success rates require budget allocation for retries and quality filtering, potentially doubling your effective costs even when the nominal per-video price appears lower.

Access Through Unified Platforms

Rather than managing separate accounts and APIs for each model, many creators access both Seedance 2.0 and Sora 2 through unified platforms that aggregate multiple AI video models. These platforms provide several advantages: single billing across all models, consistent interface design that reduces learning curves, and the ability to test different models on the same prompt for direct quality comparison.

Try Seedance 2 provides streamlined access to Seedance 2.0 alongside other leading video and image generation models. The platform eliminates the complexity of managing multiple API keys, navigating different pricing structures, and learning separate interfaces for each model. You can generate videos with Seedance 2.0, Sora 2, and other models from a single dashboard, comparing results directly to determine which model best serves each specific use case.

This unified approach proves particularly valuable for production workflows where different projects require different model strengths. Social media content might benefit from Seedance 2.0's multimodal control and high success rate, while narrative sequences might leverage Sora 2's extended duration and physics accuracy. Having both models accessible through a single platform allows you to match model capabilities to project requirements without switching between separate services.

Use Case Recommendations: Which Model for What

Choose Seedance 2.0 When:

You need maximum creative control through references. If you have specific visual styles, motion patterns, audio atmospheres, or camera movements you want to replicate, Seedance 2.0's multimodal reference system provides unmatched precision. Upload examples of what you want, describe how to combine them, and the model executes your vision with minimal prompt engineering.

You're producing high-volume social media content. The 4-15 second duration range aligns perfectly with TikTok, Instagram Reels, and YouTube Shorts formats. The 90% first-attempt success rate enables reliable production workflows where you need consistent output without extensive iteration. The native 2K resolution ensures your content looks sharp on all devices.

You require audio-visual synchronization with specific audio characteristics. Music videos, dance content, branded videos with signature sounds, and any application where audio drives the creative concept benefit from Seedance 2.0's audio reference input. You can specify exact beat patterns, vocal characteristics, or sound atmospheres that the model will match in its output.

You need maximum resolution for large-format display applications. The native 2K output provides superior detail for large-screen displays, high-definition advertising, digital signage, and any context where visual fidelity directly impacts perceived quality.

You prioritize cinematic camera work and motion aesthetics. For content where visual style, smooth camera movements, and film-like motion characteristics matter more than strict physical accuracy, Seedance 2.0's motion profile produces more aesthetically pleasing results.

Choose Sora 2 When:

You need extended duration for narrative sequences. The 5-25 second range (depending on tier) enables complete story beats, product demonstrations with multiple features, or any content that benefits from sustained temporal development without requiring multi-clip editing.

Physical accuracy and realism are critical. For content depicting real-world physics, complex interactions, sports movements, or any application where unrealistic motion would undermine credibility, Sora 2's physics simulation capabilities deliver superior results.

You prefer straightforward text-to-video workflows. If you're comfortable with prompt engineering and don't need the complexity of managing multiple reference files, Sora 2's simpler input structure provides a more streamlined experience. The model's strong semantic understanding produces excellent results from well-crafted text descriptions alone.

You need maximum temporal consistency across long clips. Sora 2's ability to maintain character appearance, world state, and narrative continuity across 20-25 second generations makes it ideal for content where consistency errors would be immediately noticeable and problematic.

You're producing fantasy, abstract, or surreal content. Sora 2's creative interpretation of abstract concepts and its ability to generate imaginative scenarios that don't exist in the real world makes it particularly effective for artistic, experimental, or conceptual video content.

Technical Limitations and Considerations

Both models have constraints that impact their suitability for specific applications.

Seedance 2.0's shorter maximum duration requires multi-clip workflows for content exceeding 15 seconds. While the extension system maintains reasonable consistency for 2-3 iterations, quality degradation becomes noticeable beyond that point. This limitation makes Seedance 2.0 less suitable for single-take narrative sequences or content that benefits from unbroken temporal flow.

The multimodal reference system, while powerful, introduces complexity. Managing multiple reference files, understanding how the model combines different input types, and learning effective reference strategies requires more upfront investment than simple text-to-video workflows. The 12-file reference limit can feel restrictive for complex projects requiring numerous style, motion, and audio references.

Seedance 2.0 currently has limited accessibility outside ByteDance's ecosystem, with API access only recently becoming available through select platforms. This restricted availability has slowed adoption compared to more widely accessible alternatives.

Sora 2's 1080p maximum resolution falls short of Seedance 2.0's 2K output, potentially limiting its suitability for applications requiring maximum visual fidelity. The higher per-second pricing can make Sora 2 significantly more expensive for high-volume production, particularly when generating longer clips at premium quality settings.

Both models occasionally produce artifacts, morphing, or inconsistencies that require regeneration. Budget for 1.5x to 2x your expected generation volume to account for quality filtering and retries. Generation times typically range from 2-5 minutes per video depending on duration, resolution, and current server load, making real-time or near-real-time applications challenging.

The Broader Competitive Landscape

While Seedance 2.0 and Sora 2 dominate current discussions, they exist within a rapidly evolving competitive landscape. Google's Veo 3.1 offers broadcast-ready output with cinema-standard frame rates and strong performance in straightforward generation tasks. Runway's Gen-4 provides the most accessible developer tooling and precise motion control through brush-based interfaces. Kling 3.0 from Kuaishou delivers excellent value for straightforward prompt-to-video workflows, particularly for Asian subjects and environments.

Each model occupies a distinct position in the ecosystem. Sora 2 remains the name-brand leader in cinematic quality and physics simulation, though its higher cost and limited availability create opportunities for alternatives. Seedance 2.0 offers the most comprehensive control system for creators who know exactly what they want and can provide reference materials. Runway Gen-4 serves developers and technical users who prioritize API quality and integration flexibility. Kling 3.0 delivers reliable results at competitive prices for users who don't need advanced reference systems or maximum physics accuracy.

The rapid pace of development means that today's leader in any specific dimension may be surpassed within months. Seedance 2.0 launched in February 2026, Sora 2 stabilized its infrastructure throughout late 2025, and Runway Gen-4 expanded its API capabilities in early 2026—all within a compressed timeframe that suggests continued rapid iteration across all platforms.

Future Trajectory and Development Roadmap

The trajectory of AI video generation points toward several clear trends that will shape both models' evolution.

Resolution will continue increasing, with 4K output becoming standard rather than exceptional. Seedance 2.0's native output is 2K, but some API tiers reportedly support up to 2160p (4K) subject to rate limits, suggesting that ultra-high-definition output will become widely accessible within the next generation of models.

Duration limits will extend further, enabling complete narrative sequences within single generations. The current 25-second maximum represents a 4x increase over earlier models, and this trend will likely continue until multi-minute continuous generations become feasible without quality degradation.

Multimodal capabilities will expand across all models. Seedance 2.0's quad-modal reference system demonstrates clear advantages in creative control, suggesting that competitors will adopt similar input flexibility. The ability to communicate creative intent through multiple channels simultaneously represents a fundamental improvement over text-only interfaces.

Physics simulation will improve across all models, narrowing the current gap between Sora 2's industry-leading accuracy and competitors' capabilities. As training datasets expand and model architectures evolve, realistic motion and physical interactions will become table stakes rather than differentiating features.

Real-time or near-real-time generation will emerge as infrastructure scales and model efficiency improves. Current 2-5 minute generation times limit certain applications; reducing this to seconds would enable entirely new use cases in live production, interactive content, and real-time creative tools.

Making the Decision: A Framework

Choosing between Seedance 2.0 and Sora 2 requires matching model capabilities to your specific requirements across several dimensions.

Evaluate your control requirements. If you have specific reference materials and need precise control over visual style, motion characteristics, and audio atmosphere, Seedance 2.0's multimodal system provides capabilities that Sora 2 cannot match. If you prefer simpler workflows and are comfortable achieving results through text prompts alone, Sora 2's streamlined approach may be more efficient.

Consider your duration needs. For clips of 5 to 15 seconds, both models work effectively. For sequences longer than 15 seconds and up to 25 seconds, Sora 2 is your only option between these two. For content exceeding 25 seconds, both models require multi-clip workflows with manual editing.
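The duration rule can be expressed as a simple lookup. This is a minimal sketch that uses only the duration ranges quoted in this article; the function name is illustrative, and tier-specific limits are not modeled.

```python
# Duration ranges in seconds, as quoted in this article.
DURATION_RANGES = {
    "Seedance 2.0": (4, 15),
    "Sora 2": (5, 25),
}


def models_for_duration(seconds):
    """Return the models whose single-generation range covers the clip length."""
    return [
        name
        for name, (shortest, longest) in DURATION_RANGES.items()
        if shortest <= seconds <= longest
    ]


for seconds in (4, 10, 20, 30):
    candidates = models_for_duration(seconds)
    label = ", ".join(candidates) if candidates else "multi-clip workflow required"
    print(f"{seconds:>2}s: {label}")
```

An empty result means neither model covers the length in a single generation, so the clip has to be assembled from multiple segments.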

Assess your physics accuracy requirements. If your content depicts real-world scenarios where unrealistic motion would be immediately noticeable—sports, complex interactions, cause-and-effect sequences—Sora 2's superior physics simulation justifies its higher cost. If visual style and aesthetic impact matter more than physical precision, Seedance 2.0's motion characteristics often produce more pleasing results.

Calculate your real-world costs. Factor in success rates, not just nominal per-video pricing. A model with 90% success rate at $1.00 per video costs effectively $1.11 per usable video. A model with 60% success rate at $0.80 per video costs $1.33 per usable video after accounting for failed generations. Seedance 2.0's higher first-attempt success rate often makes it more cost-effective despite comparable nominal pricing.
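The effective-cost figures above follow from dividing the nominal price by the success rate. The sketch below reproduces them, assuming each attempt succeeds independently with the same probability, so that the expected number of attempts per usable video is 1 divided by the success rate. The function name is illustrative.

```python
def effective_cost(price_per_video, success_rate):
    """Expected spend per usable video, assuming independent attempts.

    With a constant success probability p, the expected number of
    attempts per usable result is 1 / p, so the expected cost is price / p.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in the interval (0, 1]")
    return price_per_video / success_rate


high_success = effective_cost(1.00, 0.90)
low_success = effective_cost(0.80, 0.60)
print(f"90% success at $1.00: ${high_success:.2f} per usable video")
print(f"60% success at $0.80: ${low_success:.2f} per usable video")
```

The same function can be applied to any price and success rate you measure in your own tests, which is usually more reliable than relying on published success figures.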

Consider your resolution requirements. For content destined for large displays, high-definition advertising, or applications where maximum visual fidelity matters, Seedance 2.0's 2K output provides a meaningful advantage. For standard web and social media applications, Sora 2's 1080p output remains fully professional.

Test both models on your actual use cases. Theoretical comparisons only go so far. Generate test videos with both models using prompts representative of your real projects. Evaluate the results against your specific quality standards, workflow requirements, and creative objectives. The model that performs better on your actual content matters more than which model wins in abstract benchmarks.

Conclusion: Complementary Tools, Not Direct Competitors

Seedance 2.0 and Sora 2 represent different philosophies about how AI video generation should work. Seedance 2.0 prioritizes creative control through multimodal references, enabling precise specification of visual style, motion characteristics, and audio atmosphere through examples rather than descriptions. Sora 2 emphasizes physics accuracy and extended temporal consistency, producing videos where the world behaves realistically across longer durations.

These different approaches make the models complementary rather than directly competitive. Professional workflows increasingly use multiple models, selecting the tool that best matches each specific project's requirements. Social media content might leverage Seedance 2.0's high success rate and multimodal control. Narrative sequences might use Sora 2's extended duration and physics simulation. Product demonstrations might alternate between models depending on whether the content emphasizes visual style or realistic product interaction.

The unified platform approach, in which creators access both models through services like Try Seedance 2, reflects this reality. Rather than committing exclusively to one model's ecosystem, creators benefit from having both tools available, choosing the right model for each specific task based on actual requirements rather than platform loyalty.

As AI video generation technology continues its rapid evolution, the gap between these models will narrow in some dimensions while new differentiating features emerge in others. What remains constant is the fundamental principle: match model capabilities to project requirements, test thoroughly on your actual use cases, and remain flexible enough to adopt new tools as they prove their value in production workflows.

The future of AI video generation isn't about finding the single best model—it's about building a toolkit of complementary capabilities that together enable creative visions that were impossible just months ago. Both Seedance 2.0 and Sora 2 deserve places in that toolkit, each excelling in the dimensions that matter most for different types of content.

Key Takeaways

| Dimension | Seedance 2.0 | Sora 2 |
|---|---|---|
| Resolution | 2K (2048×1152) | 1080p |
| Duration | 4-15 seconds | 5-25 seconds |
| Input Types | Text, Image, Video, Audio | Text, Image |
| Aspect Ratios | 6 options | 3 options |
| Physics Accuracy | Good | Excellent |
| Motion Aesthetics | Excellent | Good |
| First-Try Success | ~90% | ~82% |
| Best For | Social media, multimodal control, high-res output | Narrative sequences, physics simulation, extended duration |
| Pricing Range | $0.50-1.20 per 10s video | $1.00-5.00 per 10s video |

Ready to experience both models? Try Seedance 2 provides convenient access to Seedance 2.0, Sora 2, and other leading AI video and image generation models through a single unified platform—eliminating the complexity of managing multiple services while giving you the flexibility to choose the right tool for each project.