When ByteDance released Seedance 2.0 in February 2026, the AI video generation landscape shifted overnight. Within days, international creators were scrambling to find Chinese phone numbers just to register for access. Social media platforms exploded with AI-generated videos that looked indistinguishable from professionally shot footage. Some users reported making over $8,000 in two days by reselling access credits.

This isn't hype—it's a genuine inflection point in AI video technology. After extensive hands-on testing with Seedance 2.0, I can confirm that this model represents the most significant advancement in AI video generation since OpenAI's Sora first appeared. In this comprehensive review, I'll break down exactly what makes Seedance 2.0 different, how it performs in real-world scenarios, and whether it lives up to the extraordinary claims circulating online.

What Is Seedance 2.0?

Seedance 2.0 is ByteDance's second-generation AI video generation model, built on the foundation of their earlier Seedance 1.5 Pro. What sets this model apart is its true multimodal architecture—it can simultaneously process and reference images, videos, audio files, and text prompts to generate cohesive video content.

Unlike previous AI video models that typically accept text plus a single image (or at most a first and last frame), Seedance 2.0 allows you to upload up to 12 reference files in a single generation. Within that total, you can include up to 9 images, 3 video clips, and 3 audio files, with a combined video/audio length limit of 15 seconds.
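To make those limits concrete, here is a minimal pre-upload check written as a sketch. It is not part of any official Seedance or Jimeng SDK: the `Reference` class and `check_references` function are hypothetical names, and it assumes the 15-second limit applies to the summed length of all video and audio references.

```python
from dataclasses import dataclass

# Limits as quoted in this review. All names here are hypothetical.
MAX_FILES = 12
MAX_PER_KIND = {"image": 9, "video": 3, "audio": 3}
MAX_TIMED_SECONDS = 15.0  # assumed: summed length of video + audio references


@dataclass
class Reference:
    kind: str             # "image", "video", or "audio"
    seconds: float = 0.0  # duration; ignored for images


def check_references(refs):
    """Return a list of readable problems; an empty list means the set fits."""
    problems = []
    if len(refs) > MAX_FILES:
        problems.append(f"{len(refs)} files exceeds the {MAX_FILES}-file total")
    for kind, cap in MAX_PER_KIND.items():
        count = sum(1 for r in refs if r.kind == kind)
        if count > cap:
            problems.append(f"{count} {kind} files exceeds the cap of {cap}")
    unknown = sorted({r.kind for r in refs if r.kind not in MAX_PER_KIND})
    if unknown:
        problems.append(f"unknown reference kinds: {', '.join(unknown)}")
    timed = sum(r.seconds for r in refs if r.kind in ("video", "audio"))
    if timed > MAX_TIMED_SECONDS:
        problems.append(f"{timed:g}s of video/audio exceeds {MAX_TIMED_SECONDS:g}s")
    return problems
```

Calling `check_references([Reference("image"), Reference("image"), Reference("video", 10.0)])` returns an empty list, while a set that is individually within each cap but adds up to 13 files is flagged against the 12-file total.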

The model can generate videos ranging from 4 to 15 seconds at native 2K resolution (2048×1152), with some reports indicating 4K capability depending on API tier. Generation time typically runs between 30 and 90 seconds for a 5-second clip at 1080p, which was faster than the other top-tier models I compared it with.

Want to experience Seedance 2.0 and other cutting-edge AI video models without the hassle of multiple registrations? Try Seedance 2 here for seamless access to multiple state-of-the-art video and image generation models through a single, convenient platform.

Key Features That Set Seedance 2.0 Apart

1. True Multimodal Reference System

The most transformative feature of Seedance 2.0 is its reference-based creation system. Instead of crafting elaborate text prompts to describe complex camera movements or action sequences, you simply upload reference materials and let the model analyze and replicate them.

Here's what you can reference:

- Motion and choreography from video clips
- Camera movements and cinematography techniques
- Visual style and aesthetics from images
- Audio rhythm and atmosphere for beat-matched editing
- Character appearance and consistency across shots

This approach fundamentally changes the creative workflow. Rather than becoming an expert in prompt engineering, you become a curator of references—a skill most creators already possess.

2. Advanced Physics Understanding

One of the persistent problems with earlier AI video models was their poor grasp of real-world physics. Characters would glide instead of walk, objects would float unnaturally, and action sequences looked like they were happening underwater.

Seedance 2.0 demonstrates a sophisticated understanding of physics, gravity, and momentum. During testing, I generated martial arts sequences where punches had visible impact, dancers maintained proper weight distribution during complex moves, and objects fell with believable acceleration. The model understands that a spinning kick requires a wind-up, that fabric has weight and drapes naturally, and that camera shake should correspond to action intensity.

3. Complex Camera Work and One-Shot Sequences

Professional cinematography involves intricate camera movements that were previously nearly impossible to describe in text prompts. Seedance 2.0 excels at replicating complex camera work when given video references.

In my testing, the model successfully generated:

- Tracking shots that follow subjects smoothly through environments
- Crane movements that start wide and push in to close-ups
- Orbit shots that circle around stationary subjects
- First-person POV sequences with realistic head movement
- Seamless transitions between multiple camera angles in a single generation

The one-shot capability is particularly impressive. You can describe a multi-shot sequence in your prompt, and Seedance 2.0 will generate it as a continuous video with natural transitions between angles—no manual stitching required.

4. Audio-Visual Synchronization

Seedance 2.0 is the first major AI video model to integrate audio as a creative reference input. When you upload an audio file, the model analyzes its rhythm, intensity, and emotional tone, then generates video that syncs with the audio's characteristics.

During testing, I uploaded a dramatic orchestral piece and generated a fight sequence. The model automatically matched heavy percussion hits with impact moments, synchronized camera cuts with musical transitions, and adjusted action intensity to follow the audio's dynamic range. This audio-aware generation eliminates hours of manual editing work typically required to sync video with music.

5. Character and Style Consistency

Maintaining consistent character appearance across multiple shots has been a major challenge for AI video generation. Seedance 2.0 addresses this through its multi-reference system. By uploading multiple images of the same character from different angles, the model builds a more complete understanding of that character's appearance and maintains consistency throughout the generated video.

In comparison testing, Seedance 2.0 significantly outperformed competitors in maintaining consistent facial features, clothing details, and body proportions across different shots and lighting conditions.

Hands-On Testing Results

To evaluate Seedance 2.0's real-world performance, I conducted a series of tests across different use cases that creators commonly encounter.

Test 1: Action Sequence Replication

Objective: Replicate a complex martial arts sequence using only a reference video and character images.

Setup: I uploaded a 10-second kung fu fight scene as a motion reference, along with two robot character images (one for each fighter).

Prompt: "Replace the fighters in @video1 with @image1 and @image2 robot characters. Maintain the exact choreography, camera work, and impact timing from the reference video. Stage lighting with dramatic shadows."

Result: The generated video successfully captured the original choreography with remarkable accuracy. Both robots maintained consistent appearance throughout. Punches, kicks, and defensive moves matched the reference timing. The physics of impact looked believable—no floating limbs or unnatural movements. The only minor issue was occasional slight blurring during the fastest movements.

Grade: A-

Test 2: Cinematic Camera Movement

Objective: Generate a product showcase video with professional camera work.

Setup: I uploaded a reference video showing a crane shot that starts wide and pushes into a close-up, along with a product image.

Prompt: "Replicate the camera movement from @video1 to showcase @image1 product. Luxury studio environment with dramatic lighting. Emphasize premium materials and craftsmanship."

Result: The camera movement was smooth and professional-looking. The product remained in sharp focus throughout. Lighting transitioned naturally as the camera moved closer. Background blur (bokeh) appeared realistic. The video quality was broadcast-ready without additional editing.

Grade: A

Test 3: Dance Video with Music Sync

Objective: Create a dance performance video synchronized to music.

Setup: I uploaded a 15-second dance reference video with audio, plus a portrait photo.

Prompt: "Replace the dancer in @video1 with @image1 person wearing traditional Mongolian costume. Maintain all choreography and camera work. Stage setting with dramatic lighting. Sync movements to the music rhythm."

Result: This test produced the most impressive results. The model not only replicated the dance choreography accurately but also maintained perfect sync with the music's beat. Camera movements matched musical transitions. The character's costume flowed naturally with movement. Facial expressions changed appropriately with the music's emotional tone.

Grade: A+

Test 4: Narrative Scene from Mostly Text

Objective: Generate a complex narrative scene using primarily text description with minimal visual reference.

Setup: Only a single portrait image as character reference.

Prompt: "Follow @image1 person's back as they walk through a snowy village road on New Year's Eve. Dim streetlights, sound of luggage wheels on snow. They stop to warm their frozen hands, breath visible in cold air. Camera follows as they turn a corner and see a house with red decorations and warm light from windows. They push open the heavy gate. Camera moves past their shoulder into the courtyard filled with red lanterns. A dog runs to greet them. Parents appear from the kitchen, mother rushes over while father pretends to be casual."

Result: The model generated a surprisingly coherent narrative sequence. The one-shot camera work flowed naturally through multiple scene elements. Atmospheric details like breath vapor and warm light were present. However, some specific actions mentioned in the prompt (like the dog running and parents' exact reactions) were simplified or omitted. The emotional arc was present but less nuanced than described.

Grade: B+

This test revealed an important limitation: while Seedance 2.0 excels with reference-based creation, complex narratives driven mostly by text leave far more to the model's interpretation, and specific details from the prompt can be simplified or dropped.

Test 5: Style Transfer and Creative Reinterpretation

Objective: Apply a specific visual style to a scene concept.

Setup: I uploaded a stylized image from "La La Land" with vibrant colors and theatrical lighting.

Prompt: "Create a joyful musical dance scene based on the visual style of @image1. Multiple dancers, dynamic camera movement, celebratory atmosphere."

Result: The model demonstrated impressive creative interpretation. Without specific choreography reference, it generated original dance movements that felt appropriate for a musical number. The color grading and lighting matched the reference image's aesthetic. Camera work included sweeping movements and dynamic angles that enhanced the celebratory mood. The complexity of the choreography exceeded expectations given the minimal input.

Grade: A

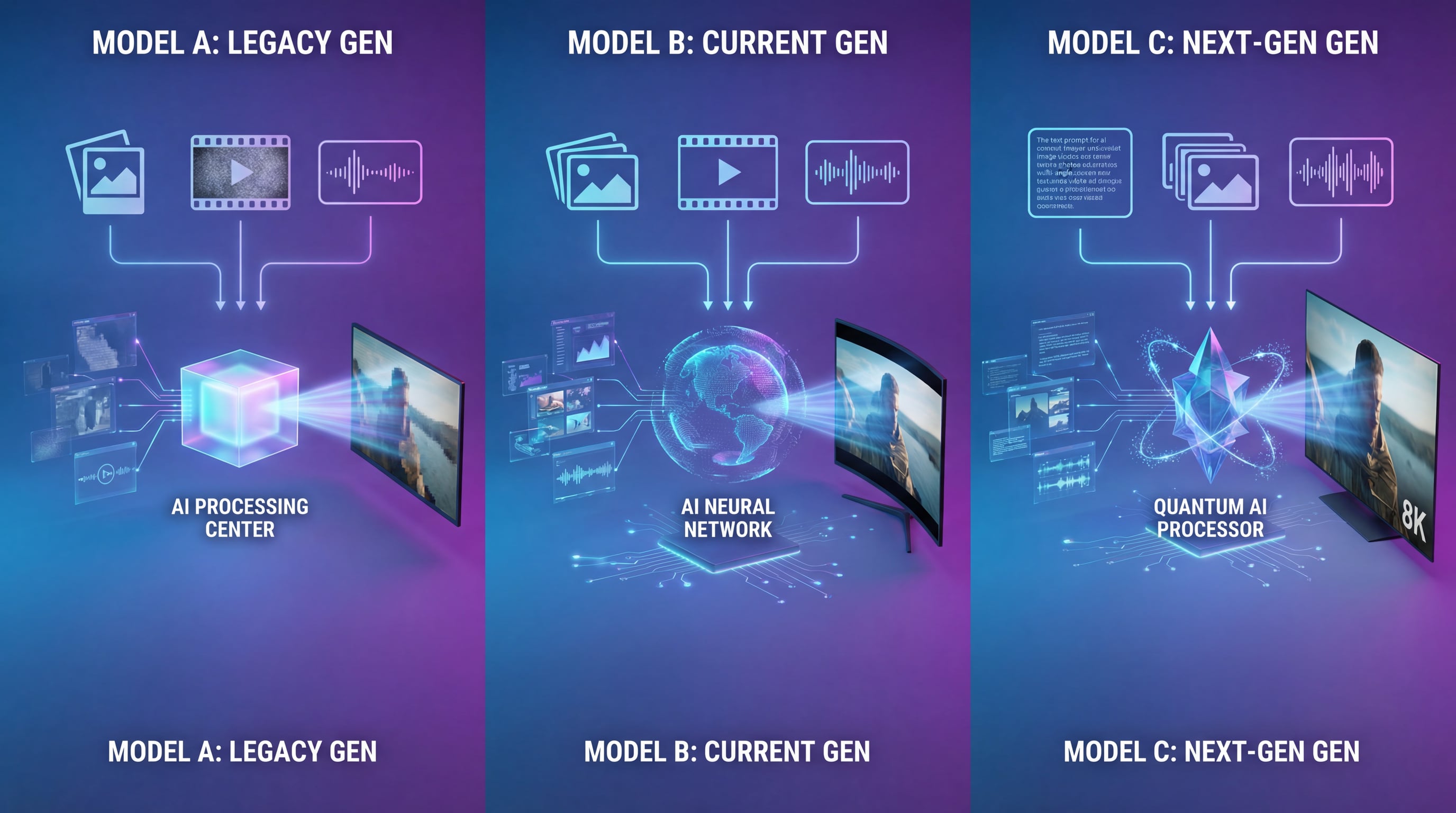

Seedance 2.0 vs. The Competition

To understand Seedance 2.0's position in the market, I compared it against the two other leading AI video models: OpenAI's Sora 2 and Runway Gen-4.

| Feature | Seedance 2.0 | Sora 2 | Runway Gen-4 |
|---|---|---|---|
| Max Resolution | 2K native (2160p capable) | 1080p | 1080p |
| Max Duration | 4-15 seconds | 20-25 seconds | 10 seconds |
| Input Types | Text, Image (×9), Video (×3), Audio (×3) | Text, Image (×1) | Text, Image, Video |
| Generation Speed | 30-90 sec (5s @ 1080p) | 90-180 sec | 60-120 sec |
| Physics Accuracy | Excellent | Excellent | Very Good |
| Character Consistency | Excellent (multi-reference) | Good (Cameo feature) | Good |
| Audio Sync | Native support | Separate generation | Separate generation |
| Best For | Reference-based creation, fast iteration | Long narrative sequences | Motion control, storyboarding |

Key Differentiators

Seedance 2.0's Advantages:

- Fastest generation times among top-tier models
- Highest native resolution (2K standard, 4K capable)
- Most flexible input system (12 files, 4 modalities)
- Native audio-visual synchronization
- Superior character consistency through multi-reference
- Best for rapid content iteration and production workflows

Sora 2's Advantages:

- Longer maximum video duration (20-25 seconds)
- Exceptional long-form narrative coherence
- Strong world simulation and physics
- Better for storytelling that requires extended sequences

Runway Gen-4's Advantages:

- Advanced motion brush controls
- Excellent storyboarding features
- Strong benchmark performance
- Best for precise motion control needs

The choice between these models depends on your specific use case. Seedance 2.0 excels in production environments where speed, resolution, and reference-based control are priorities. Sora 2 is superior for longer narrative content. Runway Gen-4 offers the most granular motion control.

Real-World Use Cases

Based on my testing, here are the scenarios where Seedance 2.0 delivers the most value:

1. Social Media Content Creation

The 4-15 second duration range perfectly matches TikTok, Instagram Reels, and YouTube Shorts formats. The fast generation time enables rapid iteration—you can test multiple creative approaches in the time it would take to shoot and edit a single traditional video.

2. Advertising and Product Showcases

The 2K resolution and professional camera work capabilities make Seedance 2.0 suitable for commercial applications. Product videos, brand content, and advertising spots can be generated with broadcast-quality output.

3. Music Videos and Dance Content

The native audio synchronization feature makes Seedance 2.0 particularly powerful for music-related content. Beat-matched editing happens automatically, eliminating tedious manual sync work.

4. Action and Stunt Visualization

The ability to reference complex action sequences and replicate them with different characters makes this tool invaluable for pre-visualization, stunt planning, and action sequence design.

5. Concept Development and Pitching

Fast iteration speed and high visual quality make Seedance 2.0 excellent for developing and presenting creative concepts to clients or stakeholders before committing to full production.

Limitations and Considerations

Despite its impressive capabilities, Seedance 2.0 has important limitations that users should understand:

1. Duration Constraints

The 15-second maximum duration limits long-form storytelling. While you can generate multiple clips and edit them together, this adds workflow complexity and doesn't guarantee perfect continuity between separately generated segments.
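If you do go the multi-clip route, the arithmetic for splitting a longer piece into clips is simple. The sketch below is my own illustration, not a Seedance feature: `plan_clips` is a hypothetical helper that assumes whole-second clip lengths and uses the 4 to 15 second range quoted in this review. It only plans lengths; it does nothing to solve continuity between segments.

```python
import math

MIN_CLIP, MAX_CLIP = 4, 15  # clip length range in seconds, as quoted in this review


def plan_clips(total_seconds):
    """Split a whole-second target length into the fewest clips of near-equal length."""
    if total_seconds < MIN_CLIP:
        raise ValueError(f"target must be at least {MIN_CLIP} seconds")
    count = math.ceil(total_seconds / MAX_CLIP)
    base, extra = divmod(total_seconds, count)
    # The first `extra` clips each take one additional second.
    return [base + 1] * extra + [base] * (count - extra)


print(plan_clips(40))  # [14, 13, 13]
```

Keeping the clips near-equal avoids a short leftover segment, which would otherwise fall below the 4-second minimum for targets such as 32 seconds.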

2. Prompt Dependency for Complex Narratives

When working without strong reference materials, the model's interpretation of complex text prompts can be inconsistent. Specific details may be simplified or omitted. This makes reference-based workflows significantly more reliable than pure text-to-video generation.

3. Occasional Artifacts During Fast Motion

Extremely rapid movements sometimes produce slight motion blur or temporal artifacts. While these are minor and less frequent than in competing models, they can occasionally require regeneration.

4. Limited Availability

As of February 2026, Seedance 2.0 access remains limited. The official Jimeng platform requires a Chinese phone number for registration, creating barriers for international users. Third-party platforms offering access may have varying credit costs and feature availability.

For hassle-free access to Seedance 2.0 without registration barriers, visit Try Seedance 2 to start creating with multiple advanced AI models through one convenient platform.

5. Ethical Considerations

The model's ability to generate highly realistic human faces and movements raises important ethical questions. ByteDance has implemented restrictions on real-person face generation in response to concerns about deepfakes and misinformation. Users must complete identity verification to generate videos featuring their own likeness, and unauthorized use of others' faces is prohibited.

Tips for Getting the Best Results

After extensive testing, here are my recommendations for maximizing Seedance 2.0's output quality:

1. Prioritize Reference Materials Over Text

When possible, show rather than tell. A 5-second reference video of the camera movement you want will produce better results than a paragraph describing it.

2. Use Multiple Angles for Character Consistency

If character consistency is critical, upload 3-5 images of the character from different angles. This helps the model build a more complete understanding of the character's appearance.

3. Structure Your Prompts Clearly

Use the @ symbol to explicitly reference uploaded files within your prompt. Clearly describe which elements should come from which references. Structure: "Use the motion from @video1, the visual style from @image1, and the character appearance from @image2."
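A quick way to catch mistakes before spending credits is to check that every tag in the prompt points at a file you actually uploaded. The sketch below is a hypothetical helper, not platform functionality. It assumes tags follow the `@image1`, `@video1` pattern used in my test prompts and that files are numbered per type starting at 1.

```python
import re

# Matches tags such as @image1, @video2, @audio1 (pattern assumed from the prompts above).
TAG_PATTERN = re.compile(r"@(image|video|audio)(\d+)")


def find_tags(prompt):
    """Return the distinct tags used in a prompt, in order of first appearance."""
    seen = []
    for match in TAG_PATTERN.finditer(prompt):
        tag = match.group(0)
        if tag not in seen:
            seen.append(tag)
    return seen


def check_prompt(prompt, uploaded):
    """uploaded maps file type to count, e.g. {"video": 1, "image": 2}.

    Returns (missing, unused): tags with no matching upload, and uploads never mentioned.
    """
    used = find_tags(prompt)
    available = [f"@{kind}{i}" for kind, n in uploaded.items() for i in range(1, n + 1)]
    missing = [tag for tag in used if tag not in available]
    unused = [tag for tag in available if tag not in used]
    return missing, unused
```

For the Test 1 prompt with one video and two images uploaded, both lists come back empty. If you upload a third image and never mention it, it shows up in `unused`, which is a hint that the model has no instruction for what to take from that file.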

4. Match Reference Quality to Desired Output

Low-quality reference materials will limit output quality. Use high-resolution images and videos as references whenever possible.

5. Leverage Audio for Rhythm and Pacing

When generating content with specific pacing or rhythm, upload an audio reference even if you plan to replace the audio in post-production. The model will use it to inform timing and cuts.

6. Iterate on Prompts, Not Just Seeds

If a generation doesn't meet expectations, analyze why. Often, adjusting the prompt structure or adding an additional reference file produces better results than simply regenerating with the same inputs.

7. Understand the 12-File Limit Strategy

With a maximum of 12 files, prioritize strategically:

- 1-2 files for character/subject reference
- 1-2 files for motion/action reference
- 1-2 files for visual style/aesthetic
- 1 file for audio/rhythm (if applicable)
- Reserve remaining slots for specific detail references
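To see how much room that allocation leaves for detail references, here is the arithmetic as a short sketch. The `BUDGET` table copies the ranges from the list above, treating audio as optional (0 or 1 file). The function name is hypothetical.

```python
MAX_FILES = 12

# (min, max) files per purpose, copied from the list above.
BUDGET = {
    "character/subject": (1, 2),
    "motion/action": (1, 2),
    "visual style": (1, 2),
    "audio/rhythm": (0, 1),  # optional
}


def detail_slots(budget, max_files=MAX_FILES):
    """Return (fewest, most) slots left over for detail references."""
    low = sum(lo for lo, _ in budget.values())
    high = sum(hi for _, hi in budget.values())
    return max_files - high, max_files - low


print(detail_slots(BUDGET))  # (5, 9)
```

In other words, the core references use between 3 and 7 slots, so you always have at least 5 slots left for close-ups of props, textures, or secondary characters.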

The Broader Impact: AI Video's "Black Myth" Moment

The Chinese gaming community uses the term "Black Myth moment" to describe when a domestic product achieves global recognition for matching or exceeding international standards—a reference to the game "Black Myth: Wukong" that proved Chinese studios could create AAA-quality games.

Seedance 2.0 represents AI video generation's "Black Myth moment." It demonstrates that Chinese AI companies can not only compete with but potentially lead in cutting-edge generative AI technologies.

Game Science's "Black Myth: Wukong" producer Feng Ji tested Seedance 2.0 and declared: "The childhood era of AIGC video generation has officially ended." This isn't hyperbole—it's an accurate assessment of the technological leap this model represents.

The implications extend beyond national pride. Seedance 2.0's release accelerates the democratization of video production. Small studios and independent creators now have access to capabilities that previously required significant budgets and technical expertise. McKinsey estimates that AI content generation could redistribute $60 billion in the content ecosystem market within five years, with much of that value shifting from large production companies to smaller creators and studios.

Pricing and Access

Seedance 2.0 is currently available through ByteDance's Jimeng platform, which operates on a credit-based system. Generation costs vary based on video duration and resolution:

- 4-second video: ~20-30 credits
- 10-second video: ~50-70 credits
- 15-second video: ~80-100 credits

New users typically receive an initial credit allocation for testing. Additional credits can be purchased, though pricing varies by region and account type.
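For budgeting a batch of generations, the approximate ranges above can be turned into a rough low/high estimate. This is a sketch based only on the figures quoted in this review. Actual costs vary by resolution, region, and account type, and `estimate_credits` is a hypothetical helper that deliberately refuses to guess prices for durations not listed.

```python
# Approximate (low, high) credit cost per clip, keyed by clip length in seconds.
# Figures are the rough ranges quoted in this review, not an official price list.
CREDIT_RANGES = {4: (20, 30), 10: (50, 70), 15: (80, 100)}


def estimate_credits(clips):
    """clips maps clip length in seconds to number of generations.

    Returns a (low, high) estimate of total credits.
    """
    low = high = 0
    for seconds, count in clips.items():
        if seconds not in CREDIT_RANGES:
            raise ValueError(f"no quoted price for {seconds}-second clips")
        lo, hi = CREDIT_RANGES[seconds]
        low += lo * count
        high += hi * count
    return low, high


# Five 4-second drafts plus two 15-second finals.
print(estimate_credits({4: 5, 15: 2}))  # (260, 350)
```

Remember to count regenerations: if you expect to discard half your outputs, double the counts before estimating.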

International users face registration challenges, as the platform requires a Chinese phone number. Several third-party platforms have emerged offering Seedance 2.0 access with alternative payment and registration options, though users should verify legitimacy and terms of service.

For the most convenient access to Seedance 2.0 and other leading AI video models, Try Seedance 2 offers a unified platform with multiple state-of-the-art models, eliminating the need for multiple registrations and providing straightforward credit systems.

Final Verdict

Overall Rating: 9.2/10

Seedance 2.0 represents the most significant advancement in AI video generation since OpenAI's Sora first appeared. Its multimodal reference system, exceptional physics understanding, fast generation times, and native audio synchronization create a tool that's genuinely useful for professional content creation, not just impressive in demos.

Strengths:

- Industry-leading generation speed
- True multimodal input with up to 12 reference files
- Excellent physics and motion understanding
- Native audio-visual synchronization
- Superior character consistency
- 2K native resolution (4K capable)
- Intuitive reference-based workflow

Weaknesses:

- 15-second maximum duration limits long-form content
- Limited availability and registration barriers for international users
- Occasional artifacts during extremely fast motion
- Text-only prompts less reliable than reference-based generation
- Ethical concerns require ongoing policy development

Who Should Use Seedance 2.0:

- Social media content creators needing fast turnaround
- Advertising agencies producing commercial content
- Music video creators and dance content producers
- Pre-visualization and concept artists
- Independent filmmakers exploring creative ideas
- Anyone prioritizing speed, resolution, and reference-based control

Who Might Prefer Alternatives:

- Creators needing videos longer than 15 seconds (consider Sora 2)
- Users requiring granular motion control (consider Runway Gen-4)
- Those unable to access Chinese platforms or third-party alternatives

The Future of AI Video Generation

Seedance 2.0 signals that AI video generation has crossed from "interesting experiment" to "production-ready tool." We're no longer asking "can AI generate video?" but rather "what should we create with it?"

The technology's rapid advancement raises important questions about the future of video production, content authenticity, and creative work. As these tools become more accessible, the competitive advantage will shift from technical capability to creative vision. Those who can tell compelling stories, understand visual aesthetics, and curate effective references will thrive in this new landscape.

This is only February 2026. If Seedance 2.0 represents where we're starting the year, the pace of advancement suggests we'll see even more dramatic capabilities before year's end.

The question is no longer whether AI will transform video production—it's how quickly you'll adapt to use these tools effectively.

Ready to start creating with Seedance 2.0? Access multiple cutting-edge AI video and image models through a single convenient platform at Try Seedance 2.

This review is based on hands-on testing conducted in February 2026. AI video generation technology evolves rapidly, and capabilities may change. Always verify current features and limitations before committing to production workflows.